Claned helps you create engaging courses that deliver the expected learning outcomes.

We remain one of the most awarded EdTech products on the market.

The Claned model combines three elements essential to building an effective and impactful online learning experience. Each of these elements is great for your learning program when mastered on its own. But they’re downright incredible when you can get them to work together, on the same team, as part of the same solution.

And that’s what the Claned model represents: an online learning solution that is whole and complete, with all three elements not just fitting together but feeding into each other.

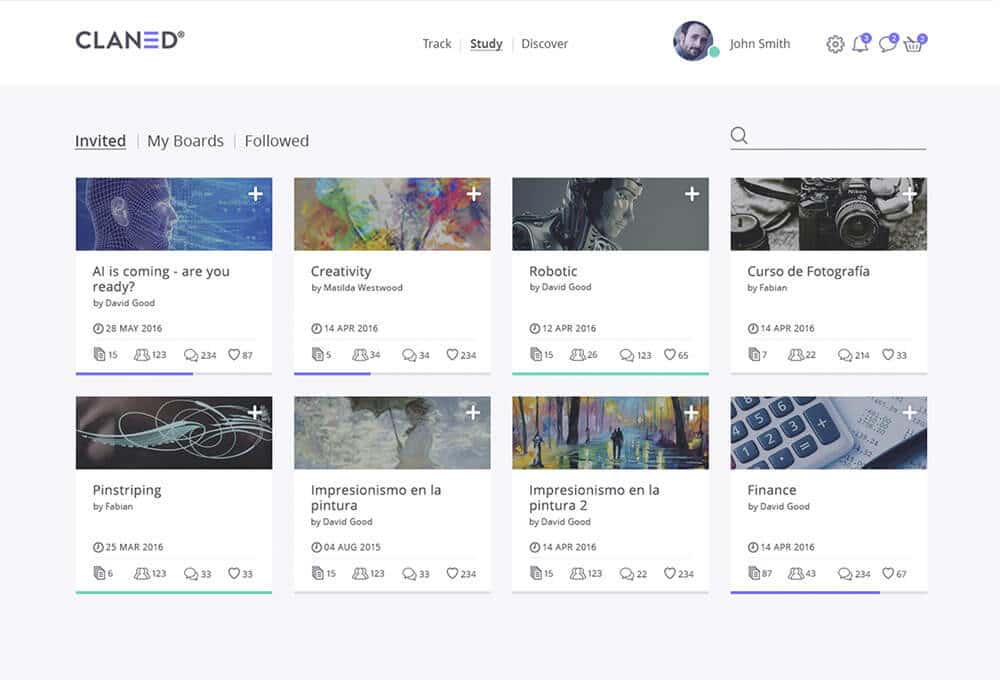

Creating your own courses in Claned is effortless. You can use any type of digital learning material you want: webinars, videos, PDFs, VR games, anything. Adding your existing learning materials to our ready-made course templates makes creating courses a breeze.

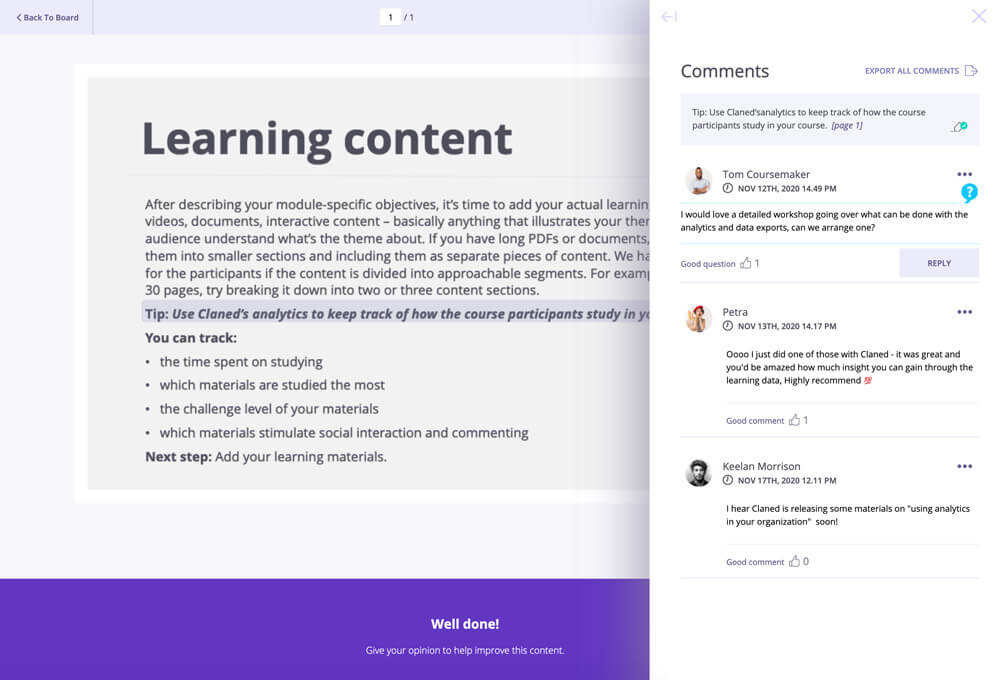

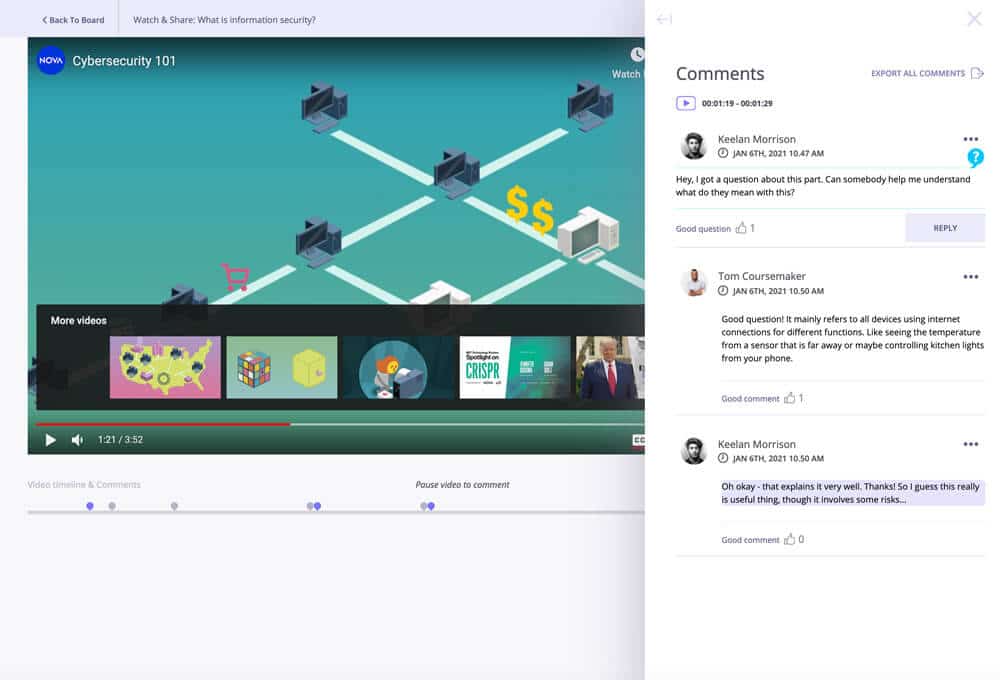

All learning materials become interactive when they are added to Claned. For example, when a learner is going through a PDF, they can highlight any part of the text and post a public comment about it. This way, discussions happen in context inside the materials instead of getting lost in a separate message board. The same goes for videos and other materials.

The Claned online learning platform encourages learners to collaborate and interact. Much as they would on social media, course participants can post comments, discuss issues, and share notes. Studies have shown that learning together increases motivation and promotes better results.

We offer a white-label option for our online learning platform. This means that the platform will be customised to reflect your branding. You can have your own logos, visuals, custom URL, and login page, making it clear to your learners that they are using your service.

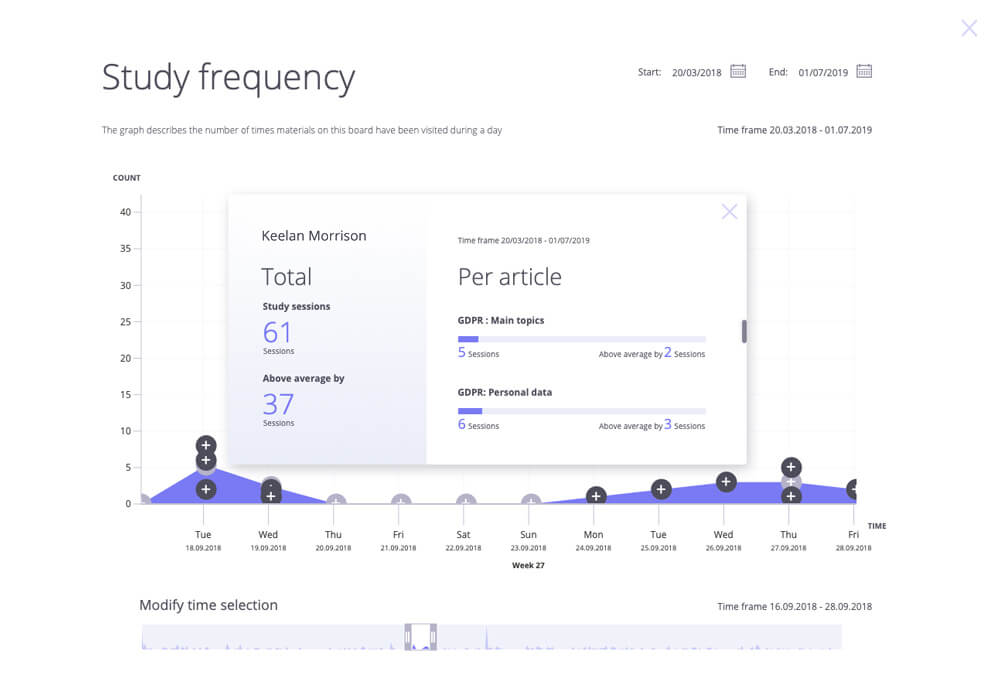

Claned has a track view that lets teachers easily follow their learners’ progress. With one click, you can see which materials each learner has completed, how much time they have spent on the course, and which materials they might be struggling with. This lets you help the learners who need it and prevent dropouts.

Track learners’ progress | Prevent dropouts

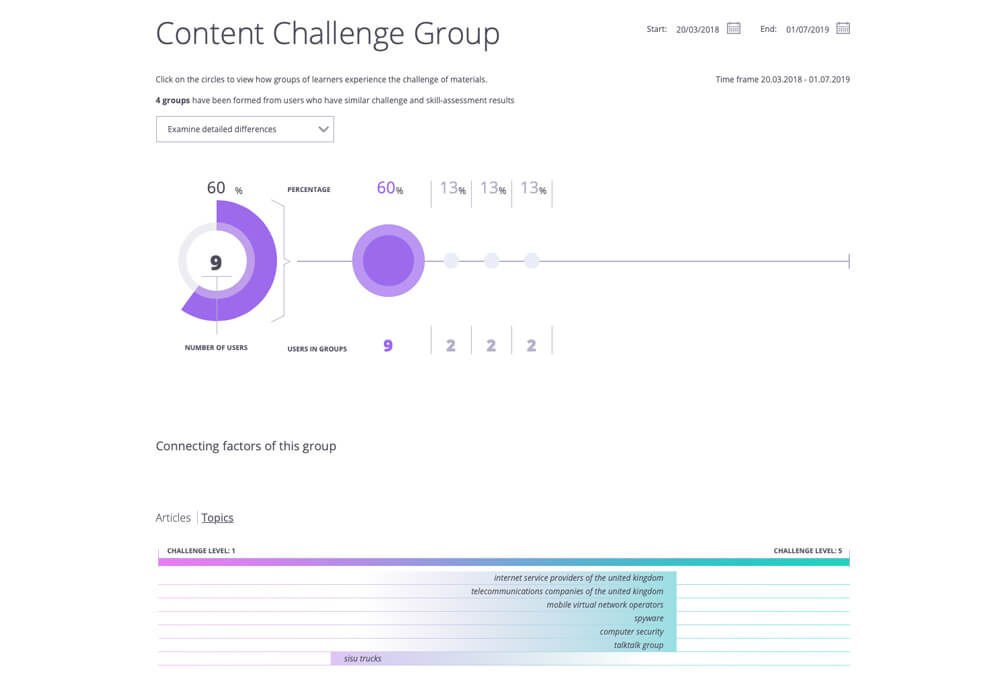

Claned automatically collects data from every interaction that happens in the online learning platform. The data has many uses, from identifying learners at risk of dropping out to improving specific areas of your courses.

Most importantly, the data shows whether your teaching is actually effective, what kind of impact it has on your organization’s KPIs, and how you can improve your courses.

Data gathering | Data analysis

The Claned LMS (learning management system) is hosted on the Microsoft Azure cloud service and uses a REST API to communicate with your existing systems.

Setting Claned up is not an IT project. There’s no need for on-site visits. Our LMS is a plug-and-play service that can be accessed from anywhere in the world, at any time. When you want to try it out, Claned is ready to rock in minutes.

Cloud-based LMS | REST API | WebHooks | Ready to use in minutes

Claned Group Oy Ab

Konepajankuja 1

00510 Helsinki

sales@claned.com

Business ID: 2422442-0

© 2023 Claned. All rights reserved.